When Your Calibrator Is Silently Zero

Dead components don't throw errors. They don't log warnings. They don't fire alerts. They return zero forever, and if zero is a semantically valid output, nothing in your monitoring stack will notice. Fire-rate tracking is the only defense.

A scoring component that is silently returning zero looks identical to a scoring component that is working correctly on markets with no edge. Your monitoring cannot tell you which one you have. Your tests cannot tell you which one you have. Your production logs cannot tell you which one you have. The only thing that can distinguish the two is fire-rate tracking — and most systems do not have it.

This is the story of one such component, how it killed a trading bot for weeks without anyone noticing, and the monitoring pattern that would have caught it on day one.

The Component

The calibrator was a 25-point contribution to a 100-point scoring rubric. Its job was to cross-reference a candidate market against two external probability sources and adjust the score upward when the sources broadly agreed on the market's likely outcome. On paper it was the system's reality check: a way to let external prediction markets weigh in on the model's conviction before capital followed.

It had unit tests. It had integration tests. It had code review. It passed every boundary condition the test author had thought to check. It was deployed alongside the other scoring components and it ran on every signal the engine produced.

It also, it turned out, returned exactly zero on 96.2% of all signals.

Why Zero

The calibrator's data sources were Metaculus and Manifold. Both are excellent forecasting platforms. Neither of them maintains broad coverage of the kind of politically granular markets the engine was scoring. On a typical signal, the calibrator would look up a candidate question, fail to find a semantic match on either platform, and return its default value of zero.

Zero was a semantically valid output. It meant "no calibration evidence available." The component was doing exactly what it was written to do. The problem was not that the calibrator was broken. The problem was that "no data available" and "calibrator is dead" produced identical behavior and identical log lines, and there was no metric in the system capable of telling them apart.

This is the definition of a silent failure: a component whose default output is valid, whose failure mode produces no exception, whose lack of contribution creates no alert, and whose role in the scoring rubric is load-bearing. When all four conditions are true simultaneously, the component is invisible to everything except the downstream effect of its absence.

The Load-Bearing Problem

The calibrator's 25 points were not decorative. They were structural. The scoring threshold was set at 70. Grok contributed up to 30. Perplexity contributed up to 30. News contributed up to 15. That is a maximum of 75 without the calibrator.

Work out the arithmetic. If the calibrator returns zero on 96.2% of signals, the ceiling on 96.2% of signals is not 100. It is 75. And the threshold sits at 70. That leaves a 5-point margin, which is effectively zero for any real scoring system with noise. The threshold of 70 out of 100 had quietly become a threshold of 70 out of 75 for almost every signal the engine ever evaluated.
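The arithmetic is small enough to check in a few lines. This is a minimal sketch using the maximum contributions and threshold quoted above; the dictionary keys and the `ceiling` helper are illustrative names, not the engine's actual code.

```python
# Maximum contribution per component and the pass threshold, as given in the text.
MAX_POINTS = {"grok": 30, "perplexity": 30, "calibrator": 25, "news": 15}
THRESHOLD = 70


def ceiling(max_points, silent=()):
    """Highest reachable score when the components in `silent` contribute zero."""
    return sum(points for name, points in max_points.items() if name not in silent)


nominal = ceiling(MAX_POINTS)                            # every component firing
effective = ceiling(MAX_POINTS, silent={"calibrator"})   # calibrator returns zero

print(nominal, nominal - THRESHOLD)      # 100 30
print(effective, effective - THRESHOLD)  # 75 5
```

A 30-point margin on paper, a 5-point margin in practice: the rubric the threshold was tuned against was not the rubric the engine was actually running.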

Nothing had cleared it in three weeks.

Fire Rate As The Missing Metric

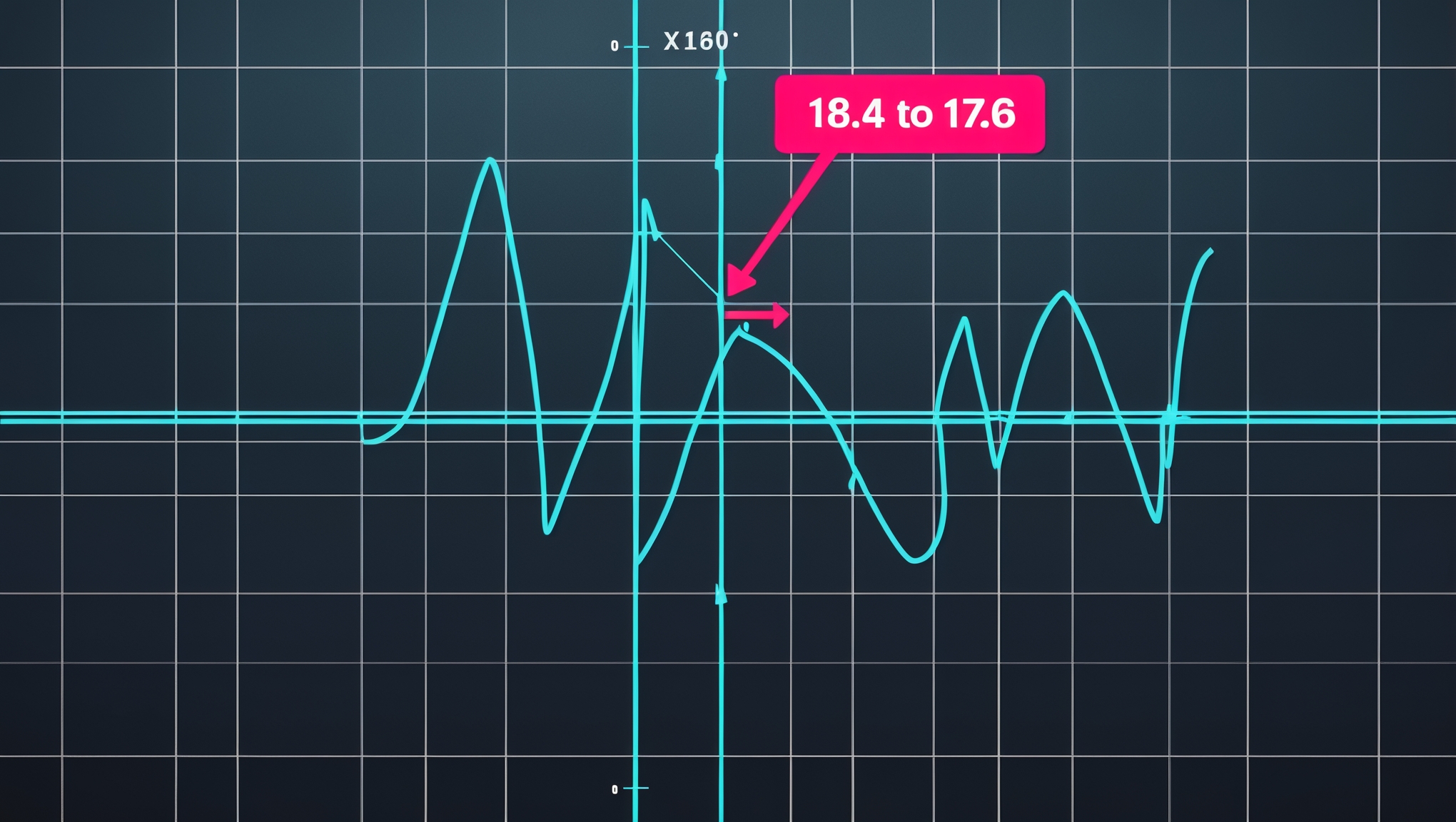

The fix was not a code change. The fix was a diagnostic change. One SQL query against stored component values, one fire rate per component, and the problem became obvious in a single row:

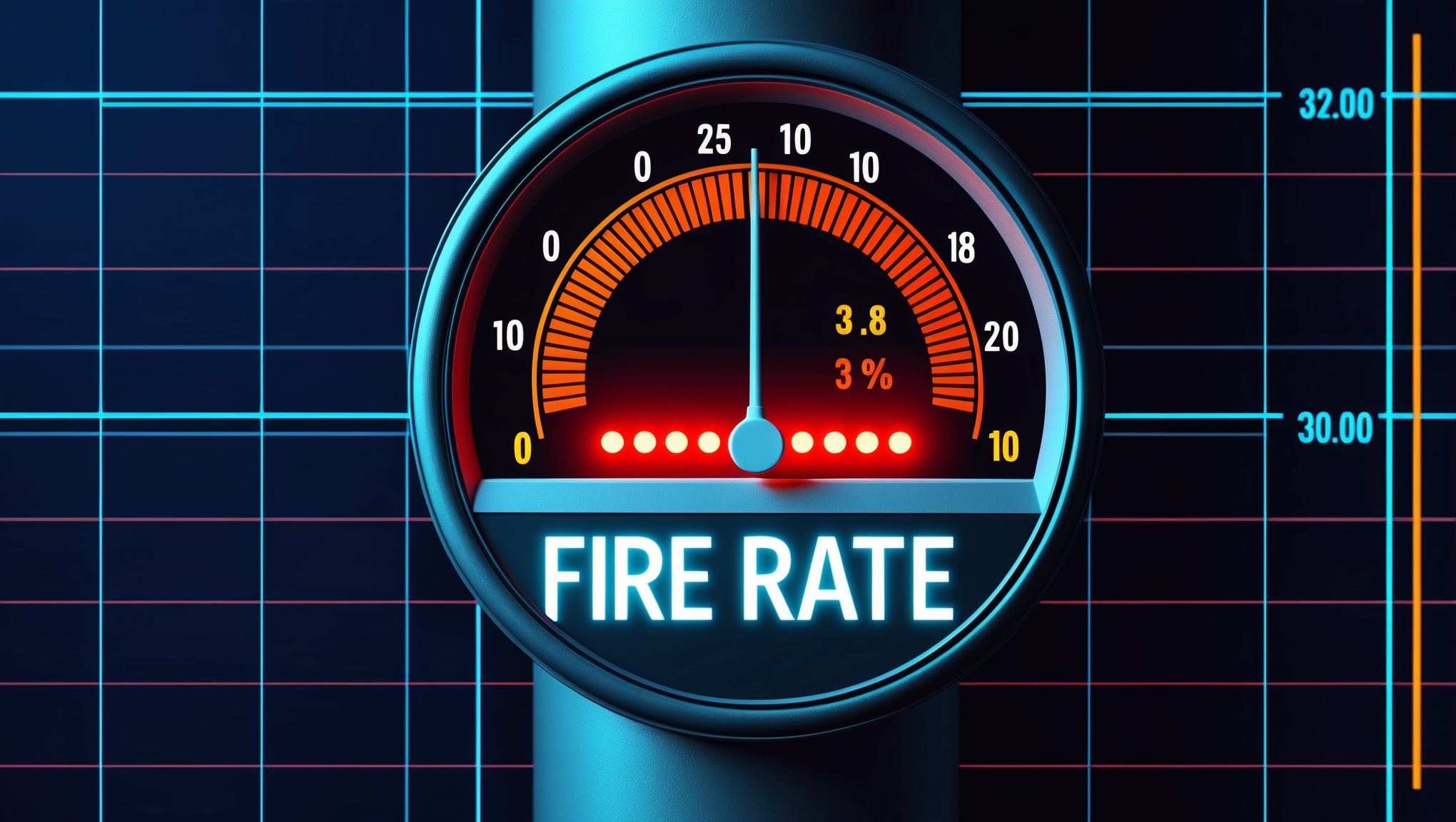

| Component | 24h Fire Rate | Max Contribution |
| --- | --- | --- |
| Grok | 94% | 30 |
| Perplexity | 91% | 30 |
| Calibrator | 3.8% | 25 |
| News | 88% | 15 |

The calibrator's fire rate was 3.8%. Every other component was firing above 85%. The asymmetry is not subtle. Any human glancing at this table would have stopped and asked what was happening to the calibrator. No human had ever looked at this table because the table had never been produced. It took exactly one query to generate, and that query existed nowhere in the monitoring stack.
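For a sense of how little it takes, here is a rough sketch of that kind of query, run against an in-memory SQLite database. The `component_scores` table, its columns, and the toy rows are all assumptions made for illustration; the real schema and data will differ.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical schema: one row per (signal, component) with the points awarded.
conn.execute(
    """
    CREATE TABLE component_scores (
        signal_id INTEGER,
        component TEXT,
        value     REAL,
        scored_at TEXT
    )
    """
)

# Made-up rows for demonstration only, not the bot's real data.
toy_rows = [
    (1, "grok", 22.0), (1, "calibrator", 0.0),
    (2, "grok", 0.0),  (2, "calibrator", 0.0),
    (3, "grok", 18.0), (3, "calibrator", 0.0),
    (4, "grok", 25.0), (4, "calibrator", 12.0),
]
conn.executemany(
    "INSERT INTO component_scores VALUES (?, ?, ?, datetime('now'))", toy_rows
)

# Fire rate = share of signals in the last 24h with a non-zero value.
# NULL values fail the `value <> 0` test, so they count as "did not fire".
FIRE_RATE_SQL = """
    SELECT component,
           ROUND(100.0 * SUM(CASE WHEN value <> 0 THEN 1 ELSE 0 END) / COUNT(*), 1)
               AS fire_rate_pct,
           COUNT(*) AS signals
    FROM component_scores
    WHERE scored_at >= datetime('now', '-1 day')
    GROUP BY component
    ORDER BY fire_rate_pct
"""


def fire_rates(connection):
    """Return (component, fire_rate_pct, signals) rows, lowest fire rate first."""
    return connection.execute(FIRE_RATE_SQL).fetchall()


for row in fire_rates(conn):
    print(row)
```

Sorting by fire rate ascending puts the quietest component at the top, which is where a dead one will show up.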

The lesson is that fire rate — defined as the percentage of signals on which a component returns a non-default, non-zero value — is the single most important metric for any scoring system that blends multiple evidence sources. It is not a nice-to-have. It is not a secondary dashboard. It is the only metric that can distinguish "component working correctly, no data available" from "component dead, no one has noticed for weeks." Those two states have the same behavior on a healthy day. They have catastrophically different behavior on the day you actually need the component.

The Rebalance Was Obvious Once The Diagnosis Was Right

Once the fire rate made the problem legible, the fix was arithmetic. We did not remove the calibrator — on the 3.8% of signals where it did fire, it still added real information. We redistributed its max contribution toward the components that were actually firing and reduced its ceiling to keep the total math honest. Grok went from 30 to 35. Perplexity went from 30 to 50. Calibrator went from 25 down to 20. News stayed at 15. Threshold stayed at 70.

The calibrator could still contribute. It just could no longer single-handedly pin the ceiling within five points of the threshold. Signals started clearing the bar the same day.
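The effect of the rebalance can be checked the same way as the original problem. This sketch takes the before and after weights exactly as stated above; `headroom_without` is a hypothetical helper, not part of the engine.

```python
THRESHOLD = 70

# Maximum contributions before and after the rebalance, as stated in the text.
BEFORE = {"grok": 30, "perplexity": 30, "calibrator": 25, "news": 15}
AFTER = {"grok": 35, "perplexity": 50, "calibrator": 20, "news": 15}


def headroom_without(weights, component, threshold=THRESHOLD):
    """Points of margin above the threshold when `component` contributes zero."""
    reachable = sum(points for name, points in weights.items() if name != component)
    return reachable - threshold


print(headroom_without(BEFORE, "calibrator"))  # 5
print(headroom_without(AFTER, "calibrator"))   # 30
```

With the calibrator silent, the margin above the threshold grows from 5 points to 30, so a signal no longer needs near-perfect marks from every other component to clear the bar.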

The General Pattern

This failure mode is not unique to trading bots. Any scoring, ranking, or classification system that blends evidence from multiple sources is vulnerable to it. A content recommender where one embedding source silently returns zeros. A credit model where a feature extractor silently returns a default. A competitive intelligence pipeline where one enrichment API silently times out and returns null. If the failure mode does not throw, does not log, and does not alert, you will not notice it until the downstream output collapses — and by then you will have already shipped the broken behavior for weeks.

The pattern for defending against this is short and should be non-negotiable:

- Every scoring component emits a fire-rate metric — a rolling 24-hour count of signals on which the component returned a meaningful (non-default, non-zero) value, divided by total signals processed.

- Alert when fire rate drops below 20%. The exact threshold is not sacred. The principle is: a load-bearing component with a fire rate under 20% is almost certainly dead, not healthy-with-no-data.

- Never trust the absence of exceptions. Exceptions measure code integrity. Fire rate measures semantic integrity. They are not the same metric, and one cannot substitute for the other.

- Log the component values alongside the final score so that when a failure is suspected, a single SQL query can produce the diagnostic table above. You cannot retroactively investigate what you did not store.
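The first two points in the list above can be sketched as a small in-process tracker. This is one possible shape under stated assumptions, not the framework's actual implementation: the `FireRateTracker` class, its parameters, and the `min_signals` guard (which avoids alerting on a nearly empty window) are all illustrative.

```python
import time
from collections import deque


class FireRateTracker:
    """Rolling fire rate for one scoring component (illustrative sketch)."""

    def __init__(self, window_seconds=24 * 3600, alert_below=0.20,
                 min_signals=50, default=0.0):
        self.window_seconds = window_seconds
        self.alert_below = alert_below
        self.min_signals = min_signals  # assumption: skip alerts on tiny samples
        self.default = default
        self._events = deque()          # (timestamp, fired) pairs, oldest first
        self._fired = 0

    def record(self, value, now=None):
        """Record one signal; it 'fires' if the value is not None or the default."""
        now = time.time() if now is None else now
        fired = value is not None and value != self.default
        self._events.append((now, fired))
        self._fired += fired
        self._evict(now)

    def _evict(self, now):
        # Drop events that have aged out of the rolling window.
        cutoff = now - self.window_seconds
        while self._events and self._events[0][0] < cutoff:
            _, fired = self._events.popleft()
            self._fired -= fired

    def fire_rate(self, now=None):
        """Fraction of in-window signals that fired, or None if the window is empty."""
        now = time.time() if now is None else now
        self._evict(now)
        if not self._events:
            return None
        return self._fired / len(self._events)

    def should_alert(self, now=None):
        rate = self.fire_rate(now)
        if rate is None or len(self._events) < self.min_signals:
            return False
        return rate < self.alert_below


# Demo: 100 signals, one per second, with only 4 non-zero calibrator values.
demo = FireRateTracker()
for i in range(100):
    demo.record(12.0 if i % 25 == 0 else 0.0, now=1000.0 + i)
print(demo.fire_rate(now=1100.0), demo.should_alert(now=1100.0))
```

Passing `now` explicitly keeps the tracker testable without touching the clock. In production the same counts would more likely be emitted to whatever metrics system already carries the other dashboards.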

This is the discipline the InDecision Framework now applies to every bot in the ecosystem. Component-level fire rates are emitted on every signal. An alert fires when any component drops below its expected rate. The cost of this monitoring is negligible. The cost of not having it was a three-week silent failure on a 340-test, 95%-coverage bot that looked healthy on every dashboard we had.

Silence is not health. If your calibrator is silently returning zero, your system is not quiet — it is broken, and you are the only one in the room who does not know yet.
